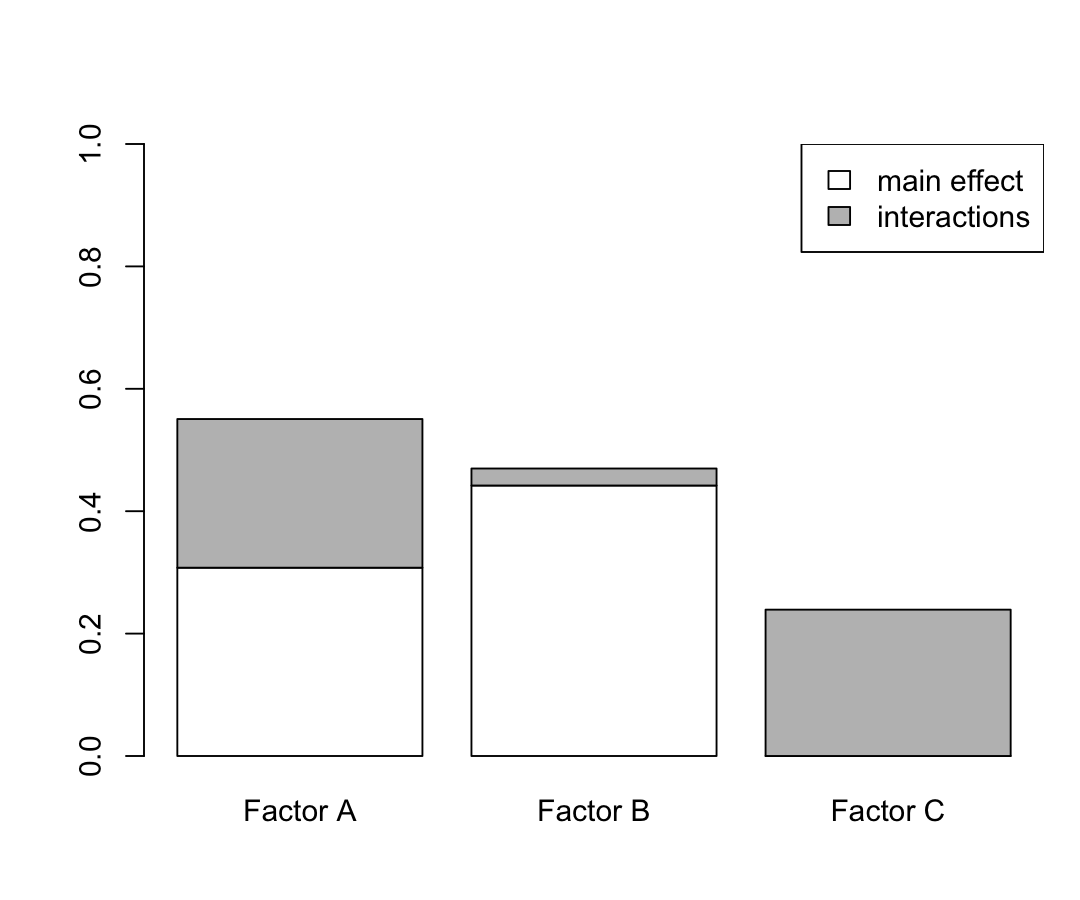

class: center, middle, inverse, title-slide

# Applying a Global Sensitivity Analysis Workflow to Improve the Computational Efficiencies in Physiologically-Based Pharmacokinetic Modeling
## <hr />
### <strong>Nan-Hung Hsieh, PhD</strong>
### .small[Postdoctoral Research Associate]

---
class: center, middle

.xxxlarge[
**Why Do We Need .bolder[Computational Modeling] in Toxicology?**
]

???

I think most people here are very experienced in laboratory experiments, whether in vivo or in vitro. But I have no idea how many people understand why we need computational modeling in our studies, so I start with this question.

---
class: center, middle

.xxlarge[
.xxxlarge[
.bolder[**Prediction**]
]]

???

We want to use mathematical or statistical models to make predictions from experimental data. Some people use the model for in vitro to in vivo extrapolation. Some use it to estimate chemical accumulation in the animal body and predict health effects. We can also integrate chemical exposure and health effects to assess human health risk.

---

# Pharmacokinetics (PK)

.large[
- The fate of a chemical in a living organism
- .bolder[ADME] process

.center[
.bolder[A]bsorption - How will it get in?

.bolder[D]istribution - Which tissues and organs will it go to?

.bolder[M]etabolism - How is it broken down and transformed?

.bolder[E]limination - How does it leave the body?
]
]

???

Now we can move on to today’s first topic. Some people here already know pharmacokinetics. It describes the fate of a chemical once it enters a living organism.

---
background-image: url(https://image.ibb.co/jNZi4e/drug_concentration_time.png)
background-size: 150px
background-position: 80% 98%

# Pharmacokinetics (PK)

.large[
- The fate of a chemical in a living organism
- .bolder[ADME] process

.center[
.bolder[A]bsorption - How will it get in?

.bolder[D]istribution - Which tissues and organs will it go to?
.bolder[M]etabolism - How is it broken down and transformed?

.bolder[E]limination - How does it leave the body?
]
]

.large[
- Kinetics: rates of change
- PK focuses on .bolder[TIME (t)] and .bolder[CONCENTRATION (C)]
]

???

Kinetics is a branch of chemistry that describes the change of one or more variables as a function of time, so we focus on time and concentration. The concentration goes up after the drug is taken, reaches a maximum, and then goes down.

---

# Pharmacokinetics Model

To .large[.bolder["predict"]] the **cumulative dose** with a constructed **compartmental model**.

.pull-left[
<img src="http://www.mdpi.com/mca/mca-23-00027/article_deploy/html/images/mca-23-00027-g006.png" height="140px" />
]

.pull-right[
<img src="https://image.ibb.co/nJTM7z/fitting.png" height="140px" />
]

`\(D\)`: Intake dose (mass)

`\(C\)`: Concentration (mass/vol.)

`\(k_a\)`: Absorption rate (1/time)

`\(V_{max}\)`: Maximal metabolism rate

`\(K_m\)`: Concentration at which the metabolism rate reaches half of `\(V_{max}\)` (mass/vol.)

`\(k_{el}\)`: Elimination rate (1/time)

???

But it is difficult to measure the whole time-concentration relationship, because the experiments take a lot of time and money. Therefore, we need toxicokinetic modeling. The basic model is built from a single variable with a few parameters. We set the input dose and define the output, such as the chemical concentration in blood or other tissues and organs. Then we set the parameters, such as the absorption and elimination rate constants. We can also use Michaelis-Menten kinetic constants to define a concentration-dependent rate for metabolism.

---

## Physiologically-Based Pharmacokinetic (PBPK) Model

Mathematically transcribe .bolder[physiological] and .bolder[physicochemical] descriptions of the phenomena involved in the complex .bolder[ADME] processes.

.shrink[  ]

.footnote[Img: http://pharmguse.net/pkdm/prediction.html]

???

But the real world is not as simple as we think.
Sometimes we need to consider complex mechanisms and factors to make a good prediction. So people developed the PBPK model, which includes physiological and physicochemical parameters that correspond to the real-world situation and can describe the complex ADME processes. It can be applied to drug discovery and development and to risk assessment.

---
class: center

# Population PBPK Model

.large[
Characterizing PK parameters for populations

Evaluating variability and uncertainty

Cross-species comparisons of metabolism
]

.footnote[[Chiu et al. 2009. https://doi.org/10.1016/j.taap.2009.07.032](https://www.sciencedirect.com/science/article/pii/S0041008X09003238#fig3)]

???

An advantage of the PBPK model is that we can extend it to additional applications, such as population PBPK modeling.

---
class: center, middle

.xxlarge[
**Population PK Data**

<i class="fa fa-arrow-up"></i>
<i class="fa fa-question"></i>
<i class="fa fa-arrow-down"></i>

**Population PBPK Model**
]

???

We have population PK data and we have also constructed the PBPK model. So the next step is to apply the model to make predictions with our data.

---

## Bayesian hierarchical modeling

**Model calibration**

.center[
Prior knowledge (expertise or historical data)

<img src="https://image.ibb.co/fATCee/Screen_Shot_2018_09_03_at_10_19_27_AM.png" height="80px" />

<i class="fa fa-arrow-down"></i>

.bolder[Markov Chain Monte Carlo algorithm]

<i class="fa fa-arrow-down"></i>

Posterior knowledge

<img src="https://image.ibb.co/fQuKQK/Screen_Shot_2018_09_03_at_3_32_28_PM.png" height="80px" />
]

???

Usually, we use the Bayesian hierarchical modeling approach for model calibration. Before we apply this method, we need prior knowledge of the model parameters. These priors usually cover a wide range and are rough, low-precision estimates. Then we use the MCMC algorithm with experimental data to obtain more precise estimates of the model parameters.
--

.left[**Model validation**]

.center[
Compare the result of **"in-silico simulation"** and **"in-vivo observation"**
]

---
class: middle

# Tool (software)

## [GNU MCSim](http://www.gnu.org/software/mcsim/)

.large[
- Design and run statistical or simulation models (e.g., differential equations)
- Do parametric simulations (e.g., sensitivity analysis)
- Perform Monte Carlo (stochastic) simulations

.highlight[
- Do Bayesian inference for hierarchical models through .bolder[Markov Chain Monte Carlo] simulations
]
]

???

To do this model calibration, we need a tool to support our research.

---

# Challenge

.large[Currently, the **Bayesian Markov chain Monte Carlo (MCMC) algorithm** is an effective way to do population PBPK model calibration.
]

.xlarge[.bolder[**BUT**]]

???

But we have a challenge in our calibration process.

--

.large[
This method often struggles to reach **"convergence"** within acceptable computational times .bolder[(More parameters, More time!)]
]

.right[]

---
class: middle, center

## Sometimes, the result is bad ...

---
class: center, middle

.xxxlarge[**Our Problem Is ...**]

--

</br>

.xxxlarge[**How to Improve the .bolder[Computational Efficiency]**]

---

# Project

Funder: **Food and Drug Administration (FDA)**

Project Start: **Sep-2016**

Name: **Enhancing the reliability, efficiency, and usability of Bayesian population PBPK modeling**

???

For these reasons, we started this project.
--

- Specific Aim 1: Develop, implement, and evaluate methodologies for parallelizing time-intensive calculations and enhancing a simulated tempering-based MCMC algorithm for Bayesian parameter estimation (.bolder[Revise algorithm in model calibration])

--

.highlight[
- Specific Aim 2: Create, implement, and evaluate a robust Global Sensitivity Analysis algorithm to reduce PBPK model parameter dimensionality (.bolder[Parameter fixing])
]

--

- Specific Aim 3: Design, build, and test a user-friendly, open source computational platform for implementing an integrated approach for population PBPK modeling (.bolder[User friendly interface])

---

# Proposed Solution - Parameter Fixing

.large[We usually fix the "possible" non-influential model parameters through **"expert judgment"**.]

.xlarge[.bolder[BUT]]

???

Actually, we already apply the concept of parameter fixing to reduce computational time. But this is why we need an alternative way to fix the unimportant parameters, so we chose sensitivity analysis.

--

.large[This approach might cause .xlarge[**"bias"**] in parameter estimates and model predictions.]

.pull-left[
.center[]
]

.pull-right[
<img src="https://njtierney.updog.co/gifs/narnia-dart-throw.gif" height="240px" />
]

---
class: middle

# Sensitivity Analysis

</br>

.xlarge[
> Sensitivity analysis (SA) is "the study of how the uncertainty in the output of a model can be apportioned to different sources of uncertainty in the model input."
> -- [Andrea Saltelli](http://www.andreasaltelli.eu/file/repository/intro_v2b)
]

.footnote[[Saltelli A., 2002, Sensitivity Analysis for Importance Assessment, Risk Analysis, 22 (3) 1-12.](http://www.andreasaltelli.eu/file/repository/intro_v2b)]

???

This is a brief definition of sensitivity analysis.

---

# Why do we need SA?

--

.xlarge[.bolder[Parameter Prioritization]]

</br>
</br>

.xlarge[.bolder[Parameter Fixing]]

</br>
</br>

.xlarge[.bolder[Parameter Mapping]]

---

# Why do we need SA?
.xlarge[.bolder[Parameter Prioritization]]

- Identify the most important factors
- Reduce the uncertainty in the model response if it is too large (i.e., not acceptable)

.xlarge[.bolder[Parameter Fixing]]

</br>
</br>

.xlarge[.bolder[Parameter Mapping]]

---

# Why do we need SA?

.xlarge[.bolder[Parameter Prioritization]]

- Identify the most important factors
- Reduce the uncertainty in the model response if it is too large (i.e., not acceptable)

.xlarge[.bolder[Parameter Fixing]]

- Identify the least important factors
- Simplify the model if it has too many factors

.xlarge[.bolder[Parameter Mapping]]

--

- Identify critical regions of the inputs that are responsible for extreme values of the model response

---

# Classification of SA Methods

.pull-left[
**Local** (One-at-a-time)

<img src="http://evelynegroen.github.io/assets/images/fig11local.jpg" height="240px" />

**"Local"** SA focuses on sensitivity at a particular set of input parameters, usually using gradients or partial derivatives
]

???

People with modeling experience usually know local sensitivity analysis. This method is very simple: you move one parameter, keep the other parameters fixed, and check the change in the model outputs. On the other hand, researchers have also developed approaches that move all parameters at the same time and check the change in the model output. We call this global sensitivity analysis or variance-based sensitivity analysis.
--

.pull-right[
**Global** (All-at-a-time)

<img src="http://evelynegroen.github.io/assets/images/fig2global.jpg" height="240px" />

**"Global"** SA calculates the contribution from the variation of all model parameters, including .bolder[Single parameter effects] and .bolder[Multiple parameter interactions]
]

--

.large[.center[**"Global" sensitivity analysis is good at** .bolder[parameter fixing]]]

---
background-image: url(https://image.ibb.co/mGuRK9/kaizen_nguy_n_274069_unsplash.jpg)
background-size: contain

.footnote[
Img: [Unsplash](https://unsplash.com/photos/jcLcWL8D7AQ)
]

???

Here I want to use an example to explain what sensitivity analysis looks like. Imagine you’re making a mixed juice for your friend. You can put different fruits in the glass, like apple, orange, lemon, strawberry, and so on. These fruits are your parameters. But what is your model output?

---
background-image: url(https://image.ibb.co/dcgqe9/adult_beard_expression_1054048.jpg)
background-size: contain

.footnote[
Img: [Pexels](https://www.pexels.com/photo/man-in-black-crew-neck-top-1054048/)
]

???

This is your model output.

---
class: center

# Sensitivity index

.xlarge[First order] `\((S_i)\)`

</br>
</br>

.xlarge[Interaction] `\((S_{ij})\)`

</br>

.xlarge[Total order] `\((S_{T})\)`

</br>
</br>

???

This is the quantification of the impact of a model parameter.

---
class: center

# Sensitivity index

.xlarge[First order] `\((S_i)\)`

The output variance contributed by the specific parameter `\(x_i\)`, also known as **main effect**

.xlarge[Interaction] `\((S_{ij})\)`

</br>

.xlarge[Total order] `\((S_{T})\)`

</br>
</br>

???

The first-order index is like how your friend's face looks when he tastes one specific fruit.
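
In variance terms, the first-order and total-order indices are commonly written as follows. This is the standard variance-based notation rather than something shown on the slides: `\(Y\)` is the model output and `\(x_{\sim i}\)` (a symbol assumed here) denotes all parameters except `\(x_i\)`.

`$$S_i=\frac{V[E(Y\mid x_i)]}{V(Y)},\qquad S_{T}=1-\frac{V[E(Y\mid x_{\sim i})]}{V(Y)}$$`

The gap between `\(S_T\)` and `\(S_i\)` is the share of output variance that `\(x_i\)` contributes only through interactions with other parameters.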
---
class: center

# Sensitivity index

.xlarge[First order] `\((S_i)\)`

The output variance contributed by the specific parameter `\(x_i\)`, also known as **main effect**

.xlarge[Interaction] `\((S_{ij})\)`

The output variance contributed by any pair of input parameters

.xlarge[Total order] `\((S_{T})\)`

???

The interaction is like the mixed flavor from two or more fruits.

--

The output variance contributed by the specific parameter and its interactions, also known as **total effect**

--

<hr/>

.left[
.bolder[“Local”] SA usually only addresses first-order effects

.bolder[“Global”] SA can address the total effect, which includes the main effect and interactions
]

---
class: middle, center

.pull-left[
**Population PK Data**
]

.pull-right[
**Population PBPK Model**
]

.xxlarge[
<i class="fa fa-arrow-up"></i>
<i class="fa fa-question"></i>
<i class="fa fa-arrow-down"></i>

.bolder[**Global Sensitivity Analysis**]
]

???

Now we have the idea of global sensitivity analysis. But how do we apply GSA to population PBPK modeling?

---

# Challenges

</br>

.xxlarge[
**Many** algorithms have been developed, but we do not know which one is the best.
]

</br>

.xxlarge[
**No** suitable reference exists for parameter fixing in PBPK models.
]

---
class: middle

# Hypothesis

.large[
.bolder[Global Sensitivity Analysis] can provide a systematic method to ascertain which PBPK model parameters have **negligible influence** on model outputs and can be fixed to improve computational speed in Bayesian parameter estimation with **minimal bias**.
]

</br>

--

.center[.large[
.bolder[Four questions need to be answered...]
]]

---
class: center, middle

.xxlarge[
.xxxlarge[
.bolder[Time]
]

What is the relative computational efficiency of various GSA algorithms?
]

---
class: center, middle

.xxlarge[
.xxxlarge[
.bolder[Consistency]
]

Do different algorithms give consistent results as to direct and indirect parameter sensitivities?
]

---
class: center, middle

.xxlarge[
.xxxlarge[
.bolder[Performance]
]

Can we identify non-influential parameters that can be fixed in a Bayesian PBPK calibration while achieving similar degrees of accuracy and precision?
]

---
class: center, middle

.xxlarge[
.xxxlarge[
.bolder[Bias]
]

Does fixing parameters using expert judgment lead to unintentional imprecision or bias?
]

---

# Materials (1/3) - Model

.center[
<img src="https://image.ibb.co/e7eccz/JjqgWwg.gif" height="200px" />
]

.bolder[Acetaminophen] (APAP) is a widely used pain reliever and fever reducer.

The therapeutic index (ratio of toxic to therapeutic doses) is unusually small.

Phase I (.bolder[APAP to NAPQI]) is the toxicity pathway at high dose.

Phase II (.bolder[APAP to APAP-G & APAP to APAP-S]) comprises the major pathways at therapeutic dose.

</br>

.center[
.highlight[
A typical pharmacological agent with large amounts of both human and animal data.

Well-constructed and studied PBPK model.
]
]

???

I'll move on to our materials. The first one is the PBPK model. In this study, we used acetaminophen, which is a widely used medication. It has two types of metabolic pathways, associated with therapeutic and toxic effects. But the most important thing is that acetaminophen has a large amount of PK data for both humans and other animals. We also had a well-constructed and well-studied PBPK model.

---
background-image: url(https://image.ibb.co/b9F8Ze/uSCphVE.gif)
background-size: 500px
background-position: 50% 80%

# Materials (1/3) - Model

The simulation of the disposition of .bolder[acetaminophen (APAP)] and two of its key metabolites, .bolder[APAP-glucuronide (APAP-G)] and .bolder[APAP-sulfate (APAP-S)], in plasma, urine, and several pharmacologically and toxicologically relevant tissues.

.footnote[[Zurlinden, T.J. & Reisfeld, B. Eur J Drug Metab Pharmacokinet (2016) 41: 267. ](https://link.springer.com/article/10.1007%2Fs13318-015-0253-x)]

???
The acetaminophen PBPK model is constructed with several tissue compartments, such as fat, muscle, liver, and kidney. It can simulate and predict the concentrations of APAP and its two metabolites in plasma and urine. It can also describe different dosing routes, including oral and intravenous.

---
background-image: url(https://image.ibb.co/e7eccz/JjqgWwg.gif)
background-size: 300px
background-position: 95% 95%

# Parameters calibrated in previous study

.large[
.large[.bolder[2]] Acetaminophen absorption (Time constant)

.highlight[
.large[.bolder[2]] Phase I metabolism: Cytochrome P450 (M-M constant)

.large[.bolder[4]] Phase II metabolism: sulfation (M-M constant)

.large[.bolder[4]] Phase II metabolism: glucuronidation (M-M constant)

.large[.bolder[4]] Active hepatic transporters (M-M constant)
]

.large[.bolder[2]] Cofactor synthesis (fraction)

.large[.bolder[3]] Clearance (rate constant)
]

.footnote[
M-M: Michaelis–Menten
]

???

In the previous study, they chose only 21 parameters for the model calibration. These parameters can be classified into four types. You can see that most are Michaelis–Menten constants that determine the metabolism of acetaminophen.

---

# Parameters fixed in previous study

`$$V_T\frac{dC^j_T}{dt}=Q_T(C^j_A-\frac{C^j_T}{P_{T:blood}})$$`

</br>

.pull-left[
.large[**Physiological parameters**]

1 cardiac output

6 blood flow rate `\((Q)\)`

8 tissue volume `\((V)\)`
]

.pull-right[
.large[**Physicochemical parameters**]

22 partition coefficient for APAP, APAP-glucuronide, and APAP-sulfate `\((P)\)`
]

???

On the other hand, we found 37 parameters that were fixed in the previous study. The physiological parameters include cardiac output, blood flow rates, and tissue volumes. Among the physicochemical parameters, we also tested the sensitivity of the partition coefficients of acetaminophen and its conjugates. We then assigned uncertainty to these parameters and included them in the calibration.
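---

# Sketch: one tissue compartment

The tissue mass balance on the previous slide can be integrated numerically. The following is a minimal sketch, not the GNU MCSim model used in the study: it assumes a constant arterial concentration and uses made-up values for the flow `\((Q_T)\)`, volume `\((V_T)\)`, and partition coefficient `\((P_{T:blood})\)`. The function name `simulate_tissue` is hypothetical.

```python
def simulate_tissue(c_arterial, q_t, v_t, p_t, t_end, dt=0.001):
    """Integrate V_T * dC_T/dt = Q_T * (C_A - C_T / P_T:blood) with forward Euler.

    c_arterial is held constant here (an assumption for illustration);
    in a full PBPK model it would come from the blood compartment.
    """
    c_tissue = 0.0  # start with no chemical in the tissue
    for _ in range(int(round(t_end / dt))):
        dc_dt = q_t * (c_arterial - c_tissue / p_t) / v_t
        c_tissue += dc_dt * dt
    return c_tissue


# Hypothetical values: flow 1 L/h, volume 1 L, partition coefficient 2
c_late = simulate_tissue(c_arterial=1.0, q_t=1.0, v_t=1.0, p_t=2.0, t_end=40.0)
```

Setting the derivative to zero gives the steady state `\(C_T = P_{T:blood} \cdot C_A\)`, so `c_late` should approach 2.0 with these values. This shows why the partition coefficient controls how much chemical a tissue holds relative to blood.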
---

# Materials (2/3) - Population PK Data

.large[
Eight studies (n = 71) with single oral dose and three different given doses.
]

.center[
<img src="https://image.ibb.co/mfUD0K/wuYl7Bk.png" height="420px" />
]

???

Experimental data are also very important in our model calibration. We used PK data from eight studies, covering 71 subjects. All subjects were administered a single oral dose. The dose levels ranged from 325 mg in group A to 80 mg/kg in group H.

---

# Materials (3/3) - Sensitivity Analysis

.large[
**Local:**
- Morris screening

**Global:**
- Extended Fourier Amplitude Sensitivity Testing (eFAST)
- Jansen's algorithm
- Owen's algorithm
]

???

Finally, we used four sensitivity analysis algorithms as candidates and compared their results with each other.

---

# Morris screening

- Perform parameter sampling in Latin Hypercube following One-Step-At-A-Time
- Can compute the importance `\((\mu^*)\)` and interaction `\((\sigma)\)` of the effects

.pull-left[  ]

.pull-right[
<img src="180924_seminar_files/figure-html/unnamed-chunk-1-1.png" width="600px" />
]

???

The first one is the Morris method. Morris can be classified as a local sensitivity analysis, but it is an unusual local approach. Unlike traditional local methods, it can also estimate interactions. On the right-hand side is an example of Morris screening.

--

.highlight[
**1. It is semi-quantitative – the factors are ranked on an interval scale**

**2. It is numerically efficient**

**3. Not very good for factor fixing**
]

---

# Variance-based sensitivity analysis

- eFAST defines a .bolder[search curve] in the input space.
- Jansen generates .bolder[TWO] independent random sampling parameter matrices.
- Owen combines .bolder[THREE] independent random sampling parameter matrices.

.pull-left[
.center[  ]
]

.pull-right[
.center[  ]
]

???

The main difference among our global methods is the way they sample the parameters.
The eFAST method generates a search curve and samples the parameters along this curve. Jansen and Owen use Monte Carlo-based methods to sample the parameters in the parameter space.

---

# Variance-based sensitivity analysis

- eFAST defines a .bolder[search curve] in the input space.
- Jansen generates .bolder[TWO] independent random sampling parameter matrices.
- Owen combines .bolder[THREE] independent random sampling parameter matrices.

.pull-left[  ]

.pull-right[
<!-- -->
]

???

On the left-hand side is how the Monte Carlo sampling works. On the right-hand side is an example result of a variance-based sensitivity analysis.

--

.highlight[
1. **Fully quantitative**
2. **The computational cost is higher**
3. **Good for factor fixing**
]

???

Compared with Morris, the global methods have these characteristics.

---
class: center

# Workflow

.large[Reproduce result from original paper (21 parameters)]

<i class="fa fa-arrow-down"></i>

.large[Full model calibration (58 parameters)]

<i class="fa fa-arrow-down"></i>

???

Our workflow started by reproducing the result from the previous study.

--

.highlight[
.large[Sensitivity analysis using different algorithms]

**Compare the .bolder[time-cost] for the sensitivity index to become stable (Q1)**

**Compare the .bolder[consistency] across different algorithms (Q2)**
]

--

<i class="fa fa-arrow-down"></i>

.highlight[
.large[Bayesian model calibration by SA-judged influential parameters]

**Compare the model .bolder[performance] under the chosen "cut-off" (Q3)**

**Examine the .bolder[bias] for expert and SA-judged parameters (Q4)**
]

---

### Q1: What is the relative computational efficiency of various GSA algorithms?

--

.center[
<img src="https://image.ibb.co/mYWr5K/fig1.jpg" height="420px" />
]

Time spent in SA (min): Morris (2.4) < **eFAST (19.8)** `\(\approx\)` Jansen (19.8) < Owen (59.4)

Variation of SA index: Morris (2.3%) < **eFAST (5.3%)** < Jansen (8.0%) < Owen (15.9%)

???

In our results, we’ll answer the four questions.
We measured the computing time for sample numbers from 1000 to 8000 and checked the variation of the sensitivity index. As expected, Morris is the fastest approach: it computes the result rapidly at the same sample number with the lowest variability. But if we focus on the variance-based methods, eFAST shows better performance than Jansen and Owen.

---

### Q1: What is the relative computational efficiency of various GSA algorithms?

.center[
<img src="https://image.ibb.co/mYWr5K/fig1.jpg" height="420px" />
]

.center[
.highlight[
**Ans 1: The Morris method provided the most efficient computational performance and convergence result, followed by eFAST.**
]
]

???

The answer to the first question is….

---

### Q2: Do different algorithms give consistent results as to direct and indirect parameter sensitivities?

--

.pull-left[  ]

.pull-right[  ]

.center[
.xlarge[
.bolder[eFAST] `\(\approx\)` Jansen `\(\approx\)` Owen > Morris
]
]

.footnote[Grey: first-order; Red: interaction]

???

We correlated the sensitivity indices across the four algorithms, using the results for the original and for all model parameters. The grey and red colors are the correlation plots for first-order and interaction effects. We found that all global methods provide consistent sensitivity indices. However, Morris does not produce results similar to those of the other algorithms.

---

### Q2: Do different algorithms give consistent results as to direct and indirect parameter sensitivities?

.pull-left[  ]

.pull-right[  ]

.center[
.highlight[
**Ans 2: Local and global methods give inconsistent results as to direct and indirect parameter sensitivities.**
]]

---

### Q3: Can we identify non-influential parameters that can be fixed in a Bayesian PBPK model calibration while achieving similar degrees of accuracy and precision?
.center[
.pull-left[
Original model parameters (**OMP**)

<i class="fa fa-arrow-down"></i>

.bolder[Setting cut-off]

<i class="fa fa-arrow-down"></i>

Original influential parameters (**OIP**)
]

.pull-right[
Full model parameters (**FMP**)

<i class="fa fa-arrow-down"></i>

.bolder[Setting cut-off]

<i class="fa fa-arrow-down"></i>

Full influential parameters (**FIP**)
]

<i class="fa fa-arrow-down"></i>

.bolder[Model calibration and validation]
]

???

Moving on to the third question. The crucial factor in this part is how we set the cut-off for the sensitivity index that distinguishes influential from non-influential parameters. We can then use the selected parameter sets for further model calibration. To determine a reliable cut-off, we used both the original parameter set and the full parameter set. After the sensitivity analysis, we set the cut-off and ran the model calibration and validation to see which cut-off gives the better trade-off between computing time and model performance.

---

.center[
<img src="https://image.ibb.co/k8KUnU/fig3.jpg" height="600px" />
]

.footnote[OIP: original influential parameters; OMP: original model parameters; FIP: full influential parameter; FMP: full model parameters]

???

First, we used a cut-off of .05, which means that if a parameter cannot account for more than 5% of the output variance, we drop it. We found 11 influential parameters and 10 non-influential parameters in the original parameter set. However, with the cut-off at .05, we could only screen 10 parameters in the full model set. So we further used a cut-off of .01 to choose an additional 10 parameters for the model calibration.
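---

# Sketch: applying the cut-off

The cut-off rule described above can be sketched in a few lines. This is a minimal illustration, not the study's code: it assumes one sensitivity index value per parameter, and the parameter names and index values in `example_indices` are hypothetical. The helper name `split_by_cutoff` is also an assumption.

```python
def split_by_cutoff(indices, cutoff):
    """Split parameters into influential and non-influential groups.

    indices: dict mapping parameter name -> sensitivity index (0 to 1).
    A parameter is influential when its index exceeds the cut-off.
    """
    influential = sorted(name for name, s in indices.items() if s > cutoff)
    non_influential = sorted(name for name, s in indices.items() if s <= cutoff)
    return influential, non_influential


# Hypothetical index values, for illustration only
example_indices = {"Tg": 0.30, "Tp": 0.004, "Km_cyp": 0.02, "QCC": 0.08}

keep_05, fix_05 = split_by_cutoff(example_indices, cutoff=0.05)
keep_01, fix_01 = split_by_cutoff(example_indices, cutoff=0.01)
```

Lowering the cut-off from .05 to .01 moves more parameters into the calibrated group, which is the trade-off between computing time and model performance explored in Q3.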
---

.pull-left[
### The non-influential original parameters

.bolder[1] Absorption parameters

.bolder[2] Phase I metabolism parameters

.bolder[4] Phase II metabolism parameters

### The influential additional parameters

.bolder[1] physiological parameter - Cardiac output

.bolder[1] chemical-specific transport parameter - Blood:plasma ratio of APAP

.bolder[4] partition coefficients
]

.pull-right[
</br>
</br>
</br>
</br>
]

???

Here is a summary of the number of parameters that were classified as non-influential in the original parameter set. These parameters come from absorption and metabolism. In addition, we found 6 influential parameters that had been fixed in the previous study.

---

### Q3: Can we identify non-influential parameters that can be fixed in a Bayesian PBPK model calibration while achieving similar degrees of accuracy and precision?

.center[
<img src="https://image.ibb.co/g6EU4p/fig3.png" height="500px" />
]

.footnote[OIP: original influential parameters; OMP: original model parameters; FIP: full influential parameter; FMP: full model parameters]

???

We compared the observed human experimental data with the predicted values and summarized the residuals. The full influential parameter set with the cut-off at .01, which uses only 1/3 of the full model parameters, gives a result similar to the full parameter set. Also, the cut-off at .05, which uses only 10 parameters, gives a result similar to the original parameter set.

---

### Q3: Can we identify non-influential parameters that can be fixed in a Bayesian PBPK model calibration while achieving similar degrees of accuracy and precision?

.center[  ]

???

We also used the coefficient of determination to compare the validation results across studies. You can see that if the given dose is over 20 mg/kg, the estimated R2 values are very similar. However, at lower doses such as group A, the estimated model performance differs across these parameter sets.
But the parameters selected by global sensitivity analysis still give better accuracy and precision, close to those of the full parameter set.

---

### Q3: Can we identify non-influential parameters that can be fixed in a Bayesian PBPK model calibration while achieving similar degrees of accuracy and precision?

- Summary of the time-cost for
  - All model parameters (this study, 58),
  - GSA-judged parameters (this study, 20),
  - Expert-judged parameters ([Zurlinden and Reisfeld, 2016](https://link.springer.com/article/10.1007%2Fs13318-015-0253-x), 21)

<table>
 <thead>
  <tr>
   <th style="text-align:left;"> Parameter group </th>
   <th style="text-align:center;"> Time-cost in calibration (hr) </th>
  </tr>
 </thead>
<tbody>
  <tr>
   <td style="text-align:left;"> All model parameters </td>
   <td style="text-align:center;"> 104.6 (0.96) </td>
  </tr>
  <tr>
   <td style="text-align:left;"> GSA-judged parameters </td>
   <td style="text-align:center;"> 42.1 (0.29) </td>
  </tr>
  <tr>
   <td style="text-align:left;"> Expert-judged parameters </td>
   <td style="text-align:center;"> 40.8 (0.18) </td>
  </tr>
</tbody>
</table>

???

This is the time-cost when using the full parameters, the GSA-judged parameters, and the expert-judged parameters. It takes about 4 days to calibrate the model with the full parameter set, and less than half that time if we fix parameters before the calibration.

--

<br/>

.center[
.highlight[
**Ans 3: Restricting the MCMC simulations to the influential parameters with cut-off at 0.01 can reduce computational burden while showing little change in model performance.**
]]

---

### Q4: Does fixing parameters using "expert judgment" lead to unintentional imprecision or bias?

--

.center[
<img src="https://image.ibb.co/cmHcKz/fig6.jpg" height="400px" />
]

???

We examined the distributions of the tested parameters that were fixed in the previous study and determined whether the estimated maximum likelihood value differs from the fixed value.
In addition, we found that using the cut-off at .01 on the full parameter set produces a likelihood similar to that of the full parameter set.

--

.center[
.highlight[
**Ans 4: Using "expert judgment" to fix model parameters has the potential to lead to imprecision and bias. GSA was more effective at identifying parameters that are influential and led to a better fit between predictions and data.**
]]

---
class: middle

# Take Home Message

For people who have no experience in modeling...

.highlight[
- .bolder[Global sensitivity analysis] can be an essential tool to reduce the dimensionality and improve the computational efficiency in Bayesian PBPK model calibration.
]

For people who have experience in sensitivity analysis...

.highlight[
- Use .bolder[eFAST] for parameter sensitivity, making sure to check "convergence".
- Distinguish “influential” and “non-influential” parameters with a .bolder[cut-off] on the sensitivity index, and check .bolder[accuracy] and .bolder[precision] to evaluate model performance.
]

--

.large[
For more details, read this ...
]

Hsieh N-H, Reisfeld B, Bois FY and Chiu WA (2018) Applying a Global Sensitivity Analysis Workflow to Improve the Computational Efficiencies in Physiologically-Based Pharmacokinetic Modeling. *Front. Pharmacol.* 9:588. [link](https://www.frontiersin.org/articles/10.3389/fphar.2018.00588/full)

.right[]

---

# What might we have ignored in our study?

???

Now we have successfully applied the global sensitivity analysis workflow in our study. But what might we have ignored?

--

</br>

## - How to apply this GSA workflow to other cases?

</br>

## - Which external factors may change the GSA result?

</br>

## - How to determine a robust cut-off?

---

# Following Work

Reproducible research - Software development (R package)

---
class: inverse, center, middle

## Acknowledgements

**Supervisor** .large[Weihsueh A. Chiu @TAMU]

**Principal investigator** .large[Brad Reisfeld @CSU]

**Software & technical support** .large[Frederic Y.
Bois @INERIS]

**PBPK model** .large[Todd Zurlinden @USEPA]

<br />

### Funding by

<img src="https://www.fda.gov/ucm/groups/fdagov-public/documents/image/ucm519147.png" height="80px" />

---

# Reference

**Model calibration technique**

Bois, F. Y. (2009). GNU MCSim: Bayesian statistical inference for SBML-coded systems biology models. Bioinformatics 25, 1453–1454. doi: 10.1093/bioinformatics/btp162 [link](https://doi.org/10.1093/bioinformatics/btp162)

**Idea of global SA-PBPK**

McNally, K., Cotton, R., and Loizou, G. D. (2011). A workflow for global sensitivity analysis of PBPK models. Front. Pharmacol. 2:31. doi: 10.3389/fphar.2011.00031 [link](https://doi.org/10.3389/fphar.2011.00031)

**APAP-PBPK model details**

Zurlinden, T. J., and Reisfeld, B. (2016). Physiologically based modeling of the pharmacokinetics of acetaminophen and its major metabolites in humans using a Bayesian population approach. Eur. J. Drug Metab. Pharmacokinet. 41, 267–280. doi: 10.1007/s13318-015-0253-x [link](https://doi.org/10.1007/s13318-015-0253-x)

---
class: inverse, center, middle

# Thanks!

Slides at: [bit.ly/180924_seminar]()

</br>

question?

???
--- background-image: url(https://image.ibb.co/b9F8Ze/uSCphVE.gif) background-size: 300px background-position: 2% 98% # Absorption .left-column[ ] .right-column[ Using the oral dose `\((D_{oral})\)`, the bioavailability `\((F_A)\)`, and two absorption time constants, we can estimate the rate at which APAP is absorbed into the bloodstream as `$$\frac{dA_{abs}}{dt}=\frac{F_A\cdot D_{oral}\cdot\left[\exp\left(\frac{-t}{T_G}\right)-\exp\left(\frac{-t}{T_P}\right)\right]}{T_G-T_P}$$` .highlight[ `\(T_G\)` : Gastric emptying time constant `\(T_P\)` : Intestinal permeability time constant ] ] --- background-image: url(https://image.ibb.co/e7eccz/JjqgWwg.gif) background-size: 280px background-position: 95% 5% # Metabolism .left-column[ ### Phase I ] .right-column[ The rate of phase I metabolism `\((v_{cyp})\)` can be estimated from the concentration of APAP in the liver `\((C^{APAP}_{liver})\)` and 2 M-M parameters for cytochrome P450 as `$$v_{cyp} = \frac{V_{M-cyp}\cdot C^{APAP}_{liver}}{K^{APAP}_{M-cyp}+C^{APAP}_{liver}}$$` <br /> .highlight[ `\(K^{APAP}_{M-cyp}\)` : M-M constant (`\(K_m\)`) for cytochrome P450 `\(V_{M-cyp}\)` : Maximum conversion rate (`\(V_{max}\)`) for cytochrome P450 ] ] --- background-image: url(https://image.ibb.co/e7eccz/JjqgWwg.gif) background-size: 280px background-position: 95% 5% # Metabolism .left-column[ ### Phase I ### Phase II ] .right-column[ The APAP conversion rate `\((v_{conjugate})\)` can be estimated from the concentration of APAP and the fraction of cofactor in the liver `\((\phi^{cf}_{liver})\)`, with 4 Michaelis-Menten parameters for APAP-G and APAP-S, as `$$v_{conjugate} = \frac{V_{M-enz}\cdot C^{APAP}_{liver}\cdot \phi^{cf}_{liver}}{\left(K^{APAP}_{M-enz}+C^{APAP}_{liver}+\frac{(C^{APAP}_{liver})^2}{K^{APAP}_{I-enz}}\right)\left(K^{cf}_{M-enz}+\phi^{cf}_{liver}\right)}$$` <br /> .highlight[ `\(V_{M-enz}\)` : Enzyme-specific maximum rate of conversion `\(K^{APAP}_{M-enz}\)` : M-M constant for APAP `\(K^{APAP}_{I-enz}\)` : Inhibition constant `\(K^{cf}_{M-enz}\)` : 
Michaelis-Menten constant for cofactor ] ] --- background-image: url(https://image.ibb.co/e7eccz/JjqgWwg.gif) background-size: 280px background-position: 95% 5% # Cofactor synthesis .left-column[ ] .right-column[ The fraction of cofactor in the liver `\((\phi^{cf}_{liver})\)` can be estimated from the APAP conversion rate and a synthesis rate parameter as `$$\frac{d\phi^{cf}_{liver}}{dt}=-v_{conjugate}+k_{syn-cf}(1-\phi^{cf}_{liver})$$` <br /> .highlight[ `\(k_{syn-cf}\)` : Rate of synthesis of the cofactor ] ] --- background-image: url(https://image.ibb.co/b9F8Ze/uSCphVE.gif) background-size: 300px background-position: 2% 98% # Active hepatic transporters .left-column[ ] .right-column[ The rate of hepatic transport `\((v^{conj}_{mem})\)` can be estimated from the hepatic conjugate concentration `\((C^{conj}_{hep})\)` and 2 M-M parameters for APAP-G and APAP-S as `$$v^{conj}_{mem} = \frac{V^{conj}_{M-mem}\cdot C^{conj}_{hep}}{K^{conj}_{M-mem}+C^{conj}_{hep}}$$` .highlight[ `\(V^{conj}_{M-mem}\)` : Maximum transport rate `\(K^{conj}_{M-mem}\)` : Conjugate concentration at which transport reaches half of `\(V^{conj}_{M-mem}\)` ] ] --- background-image: url(https://image.ibb.co/b9F8Ze/uSCphVE.gif) background-size: 300px background-position: 2% 98% # Clearance .left-column[ ] .right-column[ The renal clearance rate can be used to estimate the amount eliminated by the kidney `\((A^j_{KE})\)` as `$$\frac{dA^j_{KE}}{dt}=k^j_{R0}\cdot BW \cdot C^j_A$$` <br /> .highlight[ `\(k^j_{R0}\)` : Rate of renal clearance of chemical `\(j\)` (`\(j\)` represents APAP, APAP-G, or APAP-S) ] ]
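---

# Rate equations in code

The rate expressions on the preceding backup slides can be sketched in Python as below. This is only a minimal illustration, not the model code used in the study: the function and argument names are hypothetical, and reading `\(BW\)` as body weight and `\(C^j_A\)` as an arterial blood concentration is an assumption.

```python
import math


def absorption_rate(t, d_oral, f_a, t_g, t_p):
    """dA_abs/dt = F_A * D_oral * [exp(-t/T_G) - exp(-t/T_P)] / (T_G - T_P)."""
    return f_a * d_oral * (math.exp(-t / t_g) - math.exp(-t / t_p)) / (t_g - t_p)


def michaelis_menten(v_max, k_m, conc):
    """Saturable rate used for phase I metabolism and hepatic transport."""
    return v_max * conc / (k_m + conc)


def conjugation_rate(v_max, k_m_apap, k_i, k_m_cf, c_liver, phi_cf):
    """Phase II rate with substrate inhibition and cofactor dependence."""
    substrate_term = k_m_apap + c_liver + c_liver ** 2 / k_i
    cofactor_term = k_m_cf + phi_cf
    return v_max * c_liver * phi_cf / (substrate_term * cofactor_term)


def cofactor_derivative(v_conjugate, k_syn_cf, phi_cf):
    """d(phi_cf)/dt = -v_conjugate + k_syn_cf * (1 - phi_cf)."""
    return -v_conjugate + k_syn_cf * (1.0 - phi_cf)


def renal_elimination_rate(k_r0, body_weight, c_arterial):
    """dA_KE/dt = k_R0 * BW * C_A (C_A taken here as arterial concentration)."""
    return k_r0 * body_weight * c_arterial
```

A quick sanity check on the absorption term: integrated over all time, the bracketed exponentials divided by `\(T_G-T_P\)` give 1, so the total amount absorbed is `\(F_A\cdot D_{oral}\)`.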